This post is based on the article “Statistical Issues in Forensic Science” by CSAFE Co-Director Hal Stern of UCI. The full article was published in March 2017.

The way that forensic evidence and other expert testimony are presented in court is determined by legal precedent, especially the 1993 U.S. Supreme Court decision in Daubert v. Merrell Dow Pharmaceuticals, and by Rule 702 of the Federal Rules of Evidence. These sources make the trial judge the “gatekeeper” who decides which testimony to allow, and set the expectation that such testimony “is based on sufficient facts or data” and “is the product of reliable principles and methods”. A 2009 National Research Council (NRC) report and a 2016 report of the President’s Council of Advisors on Science and Technology (PCAST) question whether a number of forensic science disciplines satisfy these expectations. Each calls for additional research to support the science underlying the analysis of forensic evidence.

A multidisciplinary approach is required to carry out such research because each discipline requires technical expertise to collect and prepare evidence and additional expertise to analyze and interpret the resulting data. Statistics has a crucial role to play in helping to address the challenges of forensic science. Examples of the way that statisticians and the field of statistics can contribute are described below.

Reliability and validity

Perhaps the most important challenge facing forensic science after the 2009 NRC and 2016 PCAST reports is the need for data that assesses the reliability and validity of forensic examinations and conclusions. Reliability refers to whether forensic measurements or judgements would be obtained consistently. This includes considerations of repeatability, in which one asks if the same forensic examiner would draw the same conclusion if presented with the same evidence at a different time, and considerations of reproducibility, in which one asks if the same conclusion or measurement would be obtained by other examiners. Reliability is an important first consideration but even if forensic examinations in a particular domain are reliable, that does not indicate whether they are valid or accurate. If a fingerprint examiner concludes that a latent print at the crime scene comes from the same source as a test impression made by the suspect (this conclusion is sometimes known as an identification), we need to know how accurate that conclusion is to make an informed judgment about the weight of evidence. The PCAST report carefully discussed what should be expected in validation studies (e.g., the true status of the samples should be known, the samples should be representative of casework, etc.).
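To make the distinction between repeatability and reproducibility concrete, here is a minimal Python sketch that computes both as raw agreement rates. The data, the examiner and item names, and the functions `repeatability` and `reproducibility` are all hypothetical and are not taken from the article or from any real study. Raw agreement is only one possible summary, and it does not correct for agreement that would occur by chance.

```python
from itertools import combinations

# Hypothetical study data (invented for illustration): each examiner saw each
# item twice, and conclusions[examiner][item] lists the two conclusions in order.
conclusions = {
    "examiner_A": {"item_1": ["ID", "ID"], "item_2": ["exclusion", "inconclusive"]},
    "examiner_B": {"item_1": ["ID", "ID"], "item_2": ["exclusion", "exclusion"]},
    "examiner_C": {"item_1": ["inconclusive", "ID"], "item_2": ["inconclusive", "inconclusive"]},
}


def repeatability(data):
    """Share of (examiner, item) pairs where the same examiner gave the
    same conclusion on both presentations of the item."""
    agree = 0
    total = 0
    for items in data.values():
        for calls in items.values():
            total += 1
            agree += calls[0] == calls[1]
    return agree / total


def reproducibility(data):
    """Share of examiner pairs, taken item by item, whose conclusions on the
    first presentation of the item agree with each other."""
    agree = 0
    total = 0
    item_ids = list(next(iter(data.values())).keys())
    for item in item_ids:
        first_calls = [items[item][0] for items in data.values()]
        for a, b in combinations(first_calls, 2):
            total += 1
            agree += a == b
    return agree / total


print(f"repeatability:   {repeatability(conclusions):.3f}")
print(f"reproducibility: {reproducibility(conclusions):.3f}")
```

In this invented data set the two rates differ, which illustrates that examiners can be fairly consistent with themselves while still disagreeing with one another.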

PCAST indicated that statistical methods are well developed and have been validated for single-source DNA evidence (or simple mixtures) and for latent print analysis, but reported that additional studies are needed regarding the validity of forensic examinations in other forensic science disciplines. Assessing the reliability and validity of forensic examinations is a key step in developing sound science to measure the strength of evidence, and statisticians can contribute to the design and analysis of such studies.
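One analysis step in a validation study with known ground truth is estimating an error rate together with its uncertainty. The sketch below uses the Wilson score interval for a binomial proportion, which is a standard method but only one of several reasonable choices, and it is not presented as the method used in the article or in the PCAST report. The counts and the function name `wilson_interval` are hypothetical.

```python
import math


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion.

    errors: number of erroneous conclusions observed
    trials: number of comparisons with known ground truth
    z:      normal quantile (1.96 gives roughly 95% coverage)
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= errors <= trials:
        raise ValueError("errors must be between 0 and trials")
    p = errors / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical counts: false identifications among known different-source pairs.
for errors, trials in [(0, 100), (3, 200)]:
    low, high = wilson_interval(errors, trials)
    print(f"{errors}/{trials}: observed rate {errors / trials:.4f}, "
          f"interval ({low:.4f}, {high:.4f})")
```

Even with zero observed errors in 100 hypothetical comparisons, the upper end of the interval is well above zero, which is one reason the size and design of a validation study matter.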

Task-relevant information and cognitive biases

Scientists are humans, not robots. As such, forensic science, like any other domain involving human judgements, has the potential to be compromised by cognitive bias and other cognitive factors that can influence how data are collected and analyzed and how findings are communicated. Cognitive bias refers to a disconnect between observed behavior and what most would consider rational decision making.

A number of well-known cognitive biases have been identified in other areas of human decision-making. One example is confirmation bias, where examiners may tend to favor interpretations that confirm their own preconceptions about a suspect or the evidence. Another example is the framing effect, where a forensic examiner may evaluate evidence differently depending on what contextual information about the suspect or the crime scene was provided.

Statisticians can play a role in teams that study cognitive bias and in teams that try to determine what information should be deemed relevant for a particular task.

Causal inference

Forensic examiners working in crime scene investigation, arson and blood spatter analysis attempt to reconstruct the events leading up to a crime based on evidence found at the crime scene. This process is an effort to infer the causes of observed effects. These examiners face a challenging task because it is difficult to carry out realistic controlled experiments that would allow one to reliably distinguish between competing explanations. One example is the problem of determining whether a fire developed naturally or through the use of an accelerant.

Statistical collaboration with practitioners in relevant disciplines will be valuable in strengthening inferences in these settings.

Case processing and procedures

Any process can benefit from careful analysis to understand potential limitations, bottlenecks or sources of error, and the forensic evidence analysis process is no exception.

Crime laboratories are often faced with an overwhelming workload, leading to backlogs that can slow the treatment of evidence. At the same time, maintaining quality forensic examinations requires a quality assurance program that incorporates reanalysis of evidence or verification of conclusions.

Statistical methods can play a role in designing quality assurance programs that improve the efficiency of lab operations while simultaneously ensuring the accuracy of conclusions.

Testifying on forensic evidence

Identifying appropriate ways to present forensic evidence analysis in the courtroom is an important area of research. Several studies demonstrate the difficulty that jurors can have in understanding statistical ideas like the likelihood ratio and Bayes factor. The forensic science research community is still examining the best way to present such evidence in the courtroom.
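For readers unfamiliar with the likelihood ratio, a short sketch may help show why it can be hard to interpret. A likelihood ratio compares the probability of the evidence under two competing propositions (for example, same source versus different source), and Bayes' rule in odds form says that posterior odds equal the likelihood ratio times the prior odds. The function name `posterior_probability` and all of the numbers below are hypothetical and chosen only for illustration.

```python
def posterior_probability(prior_probability, likelihood_ratio):
    """Update a prior probability using Bayes' rule in odds form:
    posterior odds = likelihood ratio * prior odds."""
    if not 0 < prior_probability < 1:
        raise ValueError("prior_probability must be strictly between 0 and 1")
    if likelihood_ratio <= 0:
        raise ValueError("likelihood_ratio must be positive")
    prior_odds = prior_probability / (1 - prior_probability)
    posterior_odds = likelihood_ratio * prior_odds
    return posterior_odds / (1 + posterior_odds)


# Hypothetical likelihood ratio of 1000 combined with different prior beliefs.
for prior in (0.001, 0.1, 0.5):
    post = posterior_probability(prior, 1000)
    print(f"prior {prior:.3f} -> posterior {post:.4f}")
```

The same likelihood ratio leads to very different posterior probabilities depending on the prior, so the ratio alone is not the probability that the suspect is the source of the evidence.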

Statisticians have an important role to play in developing approaches to presenting quantitative evaluations of evidence and in the design and analysis of juror studies to assess the effectiveness of alternative approaches.

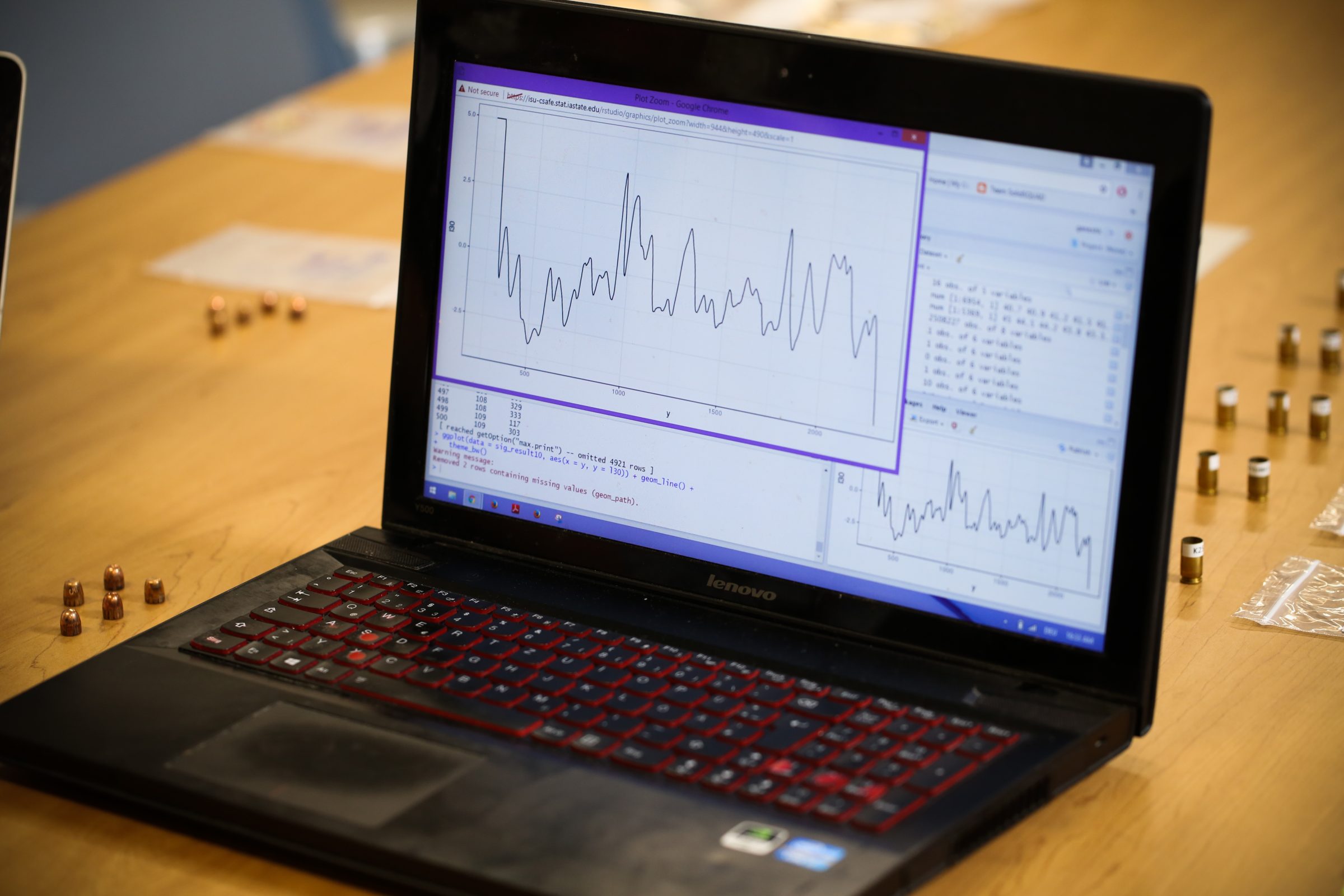

How CSAFE Research is Advancing the Field of Forensics

Statisticians at the Center for Statistics and Applications in Forensic Evidence (CSAFE) work in partnership with NIST researchers, forensic science practitioners and scientists in a variety of disciplines to address the issues raised in the NRC and PCAST reports.

Our team is helping to strengthen the statistical foundations of pattern and digital evidence through the study of existing methods and the development of new statistical methods to analyze and interpret data and evidence. Our goal is to help forensic scientists in their pursuit of reliable and accurate analyses of forensic evidence.

Learn more about our research.
