In recent years, several black box studies have attempted to estimate the error rates of firearm examiners. Most of these studies report very small overall error rates. However, little attention has been paid to how those error rates are actually calculated, particularly the effect of inconclusive findings on the result.

A study led by researchers at the Center for Statistics and Applications in Forensic Evidence (CSAFE) revisited several of the most cited black box studies on firearms examination, investigating the treatment of inconclusive results.

The study, published in Law, Probability and Risk, was led by Heike Hofmann, professor of statistics and professor-in-charge of the Data Science Program at Iowa State University; Susan VanderPlas, assistant professor of statistics at the University of Nebraska-Lincoln and corresponding author of the study; and Alicia Carriquiry, CSAFE director and a Distinguished Professor and President’s Chair in statistics at Iowa State.

For each black box study, the authors calculated the error rates from the study results using standardized methods. They also assessed the impact of the study design and treatment of inconclusives on the calculated error rates. The studies varied in structure, using either closed-set or open-set designs, and were conducted in different regions: either the United States and Canada, or Europe.

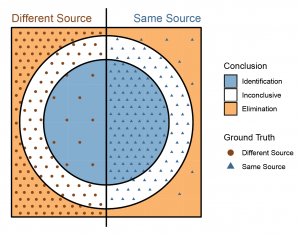

However, the researchers found that the most relevant difference was how each study treated inconclusive results. Inconclusive decisions were handled in three main ways when calculating error rates. The first option was to exclude inconclusives from the error rate. The second was to count inconclusives as correct results. The third was to count inconclusives as incorrect results.
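To see how much the choice among these three treatments can matter, here is a minimal Python sketch. The function name `error_rate` and the counts are hypothetical and chosen only for illustration; they are not taken from the CSAFE study or from any of the black box studies it reviewed.

```python
def error_rate(errors, correct, inconclusive, treatment):
    """Error rate under one of three treatments of inconclusive decisions.

    treatment:
      "exclude"   - drop inconclusives from the calculation entirely
      "correct"   - count inconclusives as correct results
      "incorrect" - count inconclusives as errors
    """
    if treatment == "exclude":
        # Inconclusives appear in neither the numerator nor the denominator.
        return errors / (errors + correct)

    total = errors + correct + inconclusive
    if treatment == "correct":
        # Inconclusives enlarge the denominator only.
        return errors / total
    if treatment == "incorrect":
        # Inconclusives are added to the errors.
        return (errors + inconclusive) / total
    raise ValueError(f"unknown treatment: {treatment!r}")


# Hypothetical counts, for illustration only (not from any study).
counts = {"errors": 2, "correct": 78, "inconclusive": 20}

rates = {
    t: error_rate(**counts, treatment=t)
    for t in ("exclude", "correct", "incorrect")
}

for treatment, rate in rates.items():
    print(f"{treatment:>9}: {rate:.1%}")
```

With these made-up counts, the same set of decisions yields an error rate of 2.5% when inconclusives are excluded, 2.0% when they count as correct, and 22.0% when they count as incorrect.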

The authors proposed a fourth option: treat inconclusive results the same as eliminations, and calculate error rates separately for the examiner and for the process.
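One way to read the first half of this proposal is sketched below in Python. If an inconclusive is counted like an elimination, it becomes an error on same-source evidence and a correct result on different-source evidence. The function name, the dictionary layout and the counts are all assumptions made for illustration, and the sketch does not attempt the separate examiner and process error rates described in the journal article.

```python
def rates_inconclusive_as_elimination(same_source, different_source):
    """Error rates when inconclusives are counted like eliminations.

    Each argument is a dict of decision counts with the keys
    'identification', 'inconclusive' and 'elimination'.
    Returns (missed_identification_rate, false_identification_rate).
    """
    ss_total = sum(same_source.values())
    ds_total = sum(different_source.values())

    # Same-source evidence: only an identification is correct, so both
    # eliminations and inconclusives count as errors.
    missed_id = (same_source["inconclusive"] + same_source["elimination"]) / ss_total

    # Different-source evidence: eliminations and inconclusives both count
    # as correct, so only identifications are errors.
    false_id = different_source["identification"] / ds_total

    return missed_id, false_id


# Hypothetical counts, for illustration only (not from any study).
same_source = {"identification": 90, "inconclusive": 8, "elimination": 2}
different_source = {"identification": 1, "inconclusive": 30, "elimination": 69}

missed_id, false_id = rates_inconclusive_as_elimination(same_source, different_source)
print(f"missed identification rate: {missed_id:.1%}")
print(f"false identification rate:  {false_id:.1%}")
```

Under this reading, a high share of inconclusives on different-source evidence does not inflate the error rate, while inconclusives on same-source evidence do.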

After inspecting the studies, the researchers found that examiners tended to lean towards identification over inconclusive or elimination. In addition, they were far more likely to reach an inconclusive with different-source evidence, which should have been an elimination in nearly all cases. They also found that process errors occurred at higher rates than examiner errors.

The authors also discovered that study design issues create a bias toward the prosecution. In many study designs, it is possible to calculate an error rate for identifications but not for eliminations. This asymmetry arises because it is difficult to determine how many non-match comparisons examiners completed in study designs where a single kit contains multiple known sources.

The authors recommend conducting larger studies, including many examiners and evaluations, and following specific design criteria.

“It seems clear from our assessment of the currently available studies that there is significant work to be done before we can confidently state an error rate associated with different components of firearms and toolmark analysis,” the study reported.

Download the journal article and read insights from this study at https://forensicstats.org/blog/2020/10/20/insights-treatment-of-inconclusives-in-the-afte-range-of-conclusions/.

Hofmann, VanderPlas and Carriquiry discussed this study during a CSAFE-hosted webinar. Watch it at https://forensicstats.org/blog/portfolio/treatment-of-inconclusive-results-in-error-rates-of-firearm-studies/.

To learn more about how error rates for pattern comparison disciplines are estimated in black box studies, attend the upcoming CSAFE webinar, Shining a Light on Black Box Studies, on April 22 from 11 a.m.–noon CST. The webinar is free and open to the public. Register at https://forensicstats.org/event/webinar-shining-a-light-on-black-box-studies/.