A recent study from the Center for Statistics and Applications in Forensic Evidence (CSAFE) analyzed the outcomes from one latent print unit’s blind quality control program. The results from the study highlight the usefulness of blind proficiency testing and the potential role for incorporating quality metrics into blind testing programs and routine casework.

The study, published in Forensic Science International, was led by Brett Gardner, a research associate from the University of Virginia; Maddisen Neuman, a quality/research associate at Houston Forensic Science Center; and Sharon Kelley, an assistant professor at the University of Virginia.

Gardner, Neuman and Kelley reviewed 376 latent prints submitted as part of 144 blind test cases over a two-and-a-half-year period. In these cases, examiners determined that nearly all latent prints examined were of sufficient quality to enter into their Automated Fingerprint Identification System (AFIS). Examiners also committed no false positive errors and only two false negative errors when the AFIS candidate list returned the correct source print. However, examiners judged that 41 percent of the test prints had no match, despite the source being in AFIS.

In addition to studying results from the blind proficiency tests, the researchers explored the relationships between print quality and case outcomes. They used widely available quality metrics software for fingerprint examiners that rates the quality of a print image on a scale of 0–100, with higher scores indicating higher quality.

They found that prints that resulted in correct Preliminary AFIS Associations had the highest quality and clarity, followed closely by prints that resulted in correct No Hit conclusions. Prints that did not result in AFIS searches had the lowest quality and clarity.

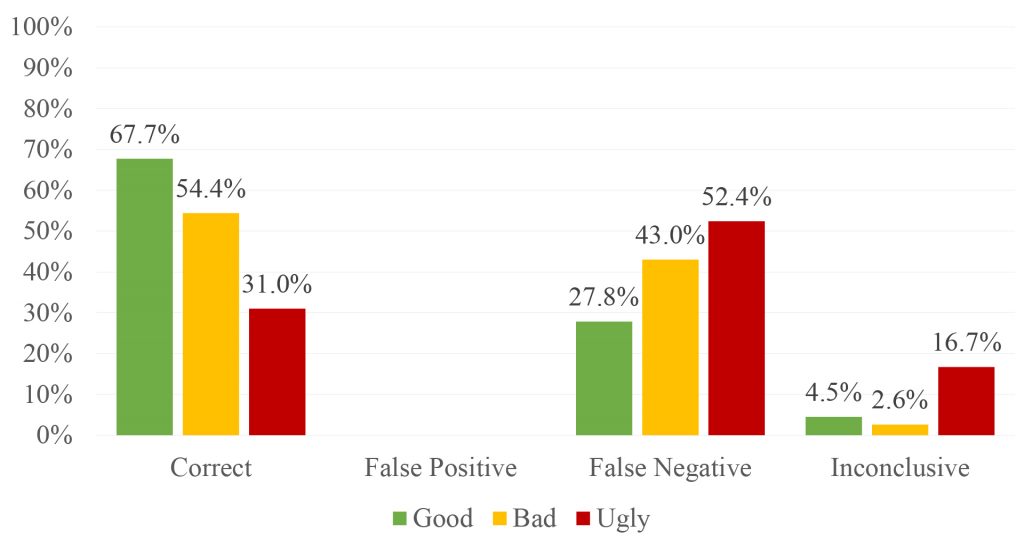

The authors state that print quality, categorized as Good, Bad or Ugly, was significantly associated with examiner conclusions and ultimate accuracy. The study reported that Good latent prints were more than twice as likely as Ugly ones to result in correct conclusions, while Ugly prints were three times more likely than Good ones to result in inconclusive results.

The study also suggests that results from the blind quality control program and the quality metrics point to potential limitations in how examiners use AFIS. The correct source for prints submitted to AFIS appeared in the top 10 results only 41.7 percent of the time, lower than the 53.4 percent estimated from the quality of those prints.

Gardner said that overall, these results highlight the utility of blind quality assurance programs as a supplement to open proficiency testing in identifying areas that need improvement. “When properly implemented, such programs have the potential to more effectively test the accuracy of the entire system, including AFIS, compared to standard proficiency tests,” he said.

He said the research also suggests a potential role for incorporating quality metrics into blind testing programs and routine casework. “Quality metrics offer objective indicators that could help ensure blind cases closely resemble standard casework, and there could be merit in screening prints for quality as the first step in analysis,” Gardner said.

View and download the journal article at https://forensicstats.org/blog/portfolio/latent-print-quality-in-blind-proficiency-testing-using-quality-metrics-to-examine-laboratory-performance/.

View insights from this study at https://forensicstats.org/blog/2021/06/02/insights-latent-print-quality-in-blind-proficiency-testing/.

Learn more about CSAFE’s research on latent print analysis at https://forensicstats.org/latent-print-analysis/.