**This is a guest post from researchers at the Innocence Project.**

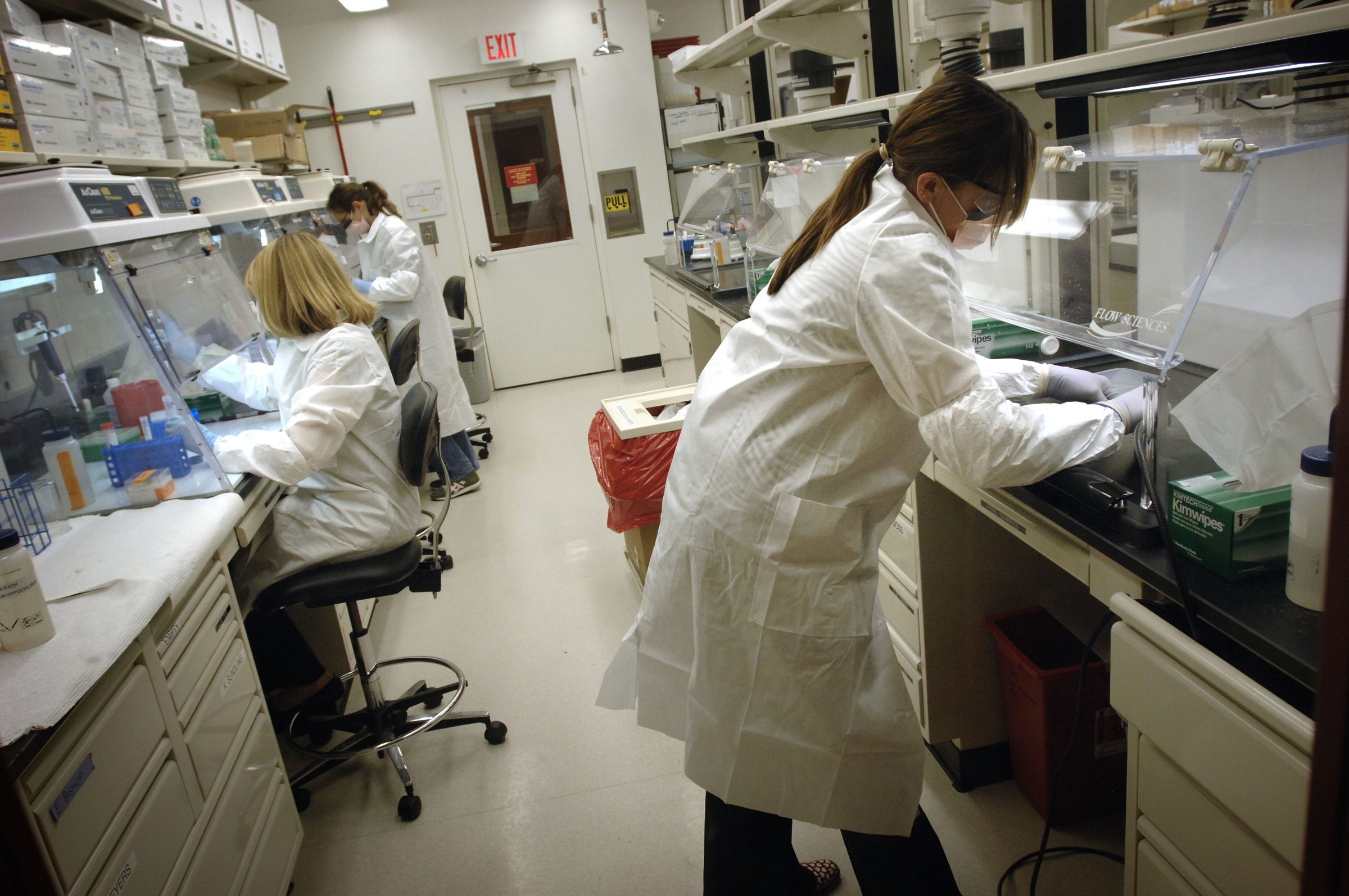

Can cognitive biases, which are a common feature of human decision-making, affect the outcome of forensic evidence analysis? An April 2019 review article by researchers from the Innocence Project shows that, yes, even well-trained and experienced forensic scientists may be susceptible to confirmation bias.

“Confirmation bias” is the tendency we all have to seek out and remember information that matches our initial impressions or beliefs, and to discount contradictory information. “Cognitive bias research in forensic science: A systematic review” by Glinda Cooper and Vanessa Meterko examines how confirmation bias can affect the evaluation of forensic evidence.

The review, published in *Forensic Science International*, covers 29 studies spanning 14 forensic disciplines. These studies explored questions such as whether case information irrelevant to the forensic testing influenced analysts’ conclusions. One study, for instance, asked whether the type of clothing found with a skeleton could affect forensic anthropologists’ conclusions about the skeleton’s sex, as determined from their analysis of the bones.

Other studies examined the process used to choose samples for comparison, such as whether a crime-scene hair sample is compared with hair from a single suspect or with a “line-up” of samples from several people, similar to the line-ups used in eyewitness identification.

The results indicate that laboratories can take preventive steps to reduce analysts’ vulnerability to confirmation bias. One such strategy is limiting access to unnecessary information, such as whether a suspect has confessed. Other measures include using multiple comparison samples and blinding analysts to the results of any previous evidence evaluations.

One of the key recommendations of the 2009 National Academy of Sciences report, *Strengthening Forensic Science in the United States: A Path Forward*, was to encourage research on human observer bias and human error. Researchers have heeded this recommendation, and interest in the topic is strong: this review ranks among the most downloaded articles in *Forensic Science International*.

CSAFE researchers are also investigating techniques to limit the impact of human factors and to distinguish between task-relevant and task-irrelevant information for forensic scientists. We look forward to collaborating with the forensic science community to promote greater accuracy in forensic evidence analysis.